In the rapidly evolving digital landscape of 2026, the proliferation of Large Language Models (LLMs) has made automated content generation a standard practice. However, a significant challenge remains: AI-generated text often carries a distinct “robotic” signature—characterized by uniform sentence lengths, predictable word choices, and a lack of emotional resonance. For developers building content platforms, SEO tools, or communication apps, this “uncanny valley” of text can lead to poor user engagement and flagging by AI detection systems.

The solution lies in a new class of middleware: the AI text humanization API. These tools do more than just swap synonyms; they fundamentally restructure text to mimic the rhythm, flow, and nuance of human thought. This guide explores how developers can integrate these powerful tools to enhance content authenticity at scale.

Understanding the Core Architecture of Humanization APIs

Before beginning the integration process, it is essential to understand what an AI text humanizer API actually does. Unlike basic paraphrasing tools, a true humanizer analyzes the “perplexity” and “burstiness” of text.

- Perplexity: This measures how predictable a text is to a language model; lower perplexity means more predictable text. Because LLMs favor high-probability word sequences, their output tends to have low perplexity. Humanizers introduce "planned randomness" to raise this score so the text feels more spontaneous.

- Burstiness: This refers to variation in sentence length and structure. AI tends to produce sentences of very similar length, while human writing naturally "bursts", mixing long, complex clauses with short, punchy statements.

By modifying these statistical patterns at a structural level, the API transforms a dry, mechanical paragraph into something that feels organic and relatable.
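To make the two metrics concrete, here is a minimal Python sketch. Perplexity is computed as exp(-(1/N) Σ log p(wᵢ | w₍<ᵢ₎)), and the sketch assumes you already have per-token natural-log probabilities from some scoring language model (obtaining them is outside its scope). For burstiness it uses the coefficient of variation (standard deviation divided by mean) of sentence lengths, which is one common proxy rather than a standard definition. The function names are illustrative, not part of any provider's API.

```python
import math
import re
from statistics import mean, pstdev


def perplexity(token_logprobs: list[float]) -> float:
    """Perplexity from per-token natural-log probabilities: exp(-mean(log p))."""
    if not token_logprobs:
        raise ValueError("need at least one token")
    return math.exp(-mean(token_logprobs))


def burstiness(text: str) -> float:
    """Coefficient of variation (std / mean) of sentence lengths, in words."""
    # Naive split after '.', '!' or '?' followed by whitespace; fine for a sketch.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) < 2:
        return 0.0  # a single sentence has no variation to measure
    return pstdev(lengths) / mean(lengths)
```

For example, "The tool is fast. The tool is cheap. The tool is good." scores a burstiness of 0.0, because every sentence has exactly four words; mixing a two-word sentence with a fifteen-word one pushes the score well above zero.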

How to Integrate an AI Text Humanization API: The Workflow

Integrating a humanization service into your application is generally straightforward, because most providers expose HTTP endpoints that accept and return common data formats such as JSON. Below is the conceptual workflow for a successful implementation.

1. Authentication and Security Setup

The first step is securing your access. Most services use token-based authentication, typically an API key sent with every request. As a best practice, store these credentials in environment variables (or a secrets manager) rather than hard-coding them in the application's source. This keeps sensitive keys out of version control while still letting the application authenticate with the API provider on every request.
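A minimal sketch of loading the key from the environment, assuming a hypothetical variable name `HUMANIZER_API_KEY` and a Bearer-token header; both are illustrative, so check your provider's documentation for the scheme it actually expects.

```python
import os


def auth_headers(env_var: str = "HUMANIZER_API_KEY") -> dict[str, str]:
    """Build auth headers from a key stored in an environment variable.

    HUMANIZER_API_KEY is a hypothetical name; use whatever your deployment defines.
    """
    api_key = os.environ.get(env_var)
    if not api_key:
        # Fail fast instead of sending unauthenticated requests.
        raise RuntimeError(f"{env_var} is not set")
    # Bearer tokens are a common scheme, but this is an assumption about the provider.
    return {"Authorization": f"Bearer {api_key}"}
```

Failing at startup when the variable is missing surfaces configuration mistakes immediately, rather than as a stream of 401 responses later.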

2. Defining the Request Structure

The humanization process typically works as a simple request-response exchange. Your application sends a request containing the raw AI-generated text along with parameters, which often include "intensity" levels or "tone" settings (such as professional, casual, or creative). These parameters let you tailor the output to the end user's needs so that the humanized text fits its intended context.
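The sketch below builds such a request with Python's standard `urllib.request`. The endpoint URL, the JSON field names (`text`, `tone`, `intensity`), and the 0-to-1 intensity scale are all hypothetical placeholders; real providers define their own schemas.

```python
import json
import urllib.request

# Hypothetical tone values, mirroring the examples in the text above.
ALLOWED_TONES = {"professional", "casual", "creative"}


def build_humanize_request(
    text: str,
    api_key: str,
    tone: str = "professional",
    intensity: float = 0.5,
    url: str = "https://api.example.com/v1/humanize",  # placeholder endpoint
) -> urllib.request.Request:
    """Build (but do not send) a POST request to a hypothetical humanizer API."""
    if tone not in ALLOWED_TONES:
        raise ValueError(f"unsupported tone: {tone!r}")
    if not 0.0 <= intensity <= 1.0:
        raise ValueError("intensity must be between 0 and 1")
    body = json.dumps({"text": text, "tone": tone, "intensity": intensity}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )
```

Validating parameters locally catches typos before they cost a billed round trip. Sending the request is then a matter of `with urllib.request.urlopen(req, timeout=30) as resp: result = json.load(resp)`.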

3. Managing Real-Time Content Streaming

For applications processing long-form content, such as comprehensive blog posts or technical whitepapers, latency can become an issue. To improve the user experience, many 2026-era APIs support streaming responses. Your application can then receive and display the humanized text piece by piece as it is processed, rather than waiting for the entire document to finish, which makes the interface feel much more responsive.
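Streaming formats differ between providers (server-sent events, newline-delimited JSON, and others). As a minimal sketch, assume a hypothetical newline-delimited JSON stream in which each line carries a `{"delta": "..."}` fragment and a final `{"done": true}` line ends the stream:

```python
import json
from typing import Iterable, Iterator


def iter_humanized_chunks(lines: Iterable[bytes]) -> Iterator[str]:
    """Yield text fragments from a newline-delimited JSON stream.

    Assumed (hypothetical) wire format, one JSON object per line:
        {"delta": "partial text"} ... {"done": true}
    """
    for raw in lines:
        line = raw.strip()
        if not line:
            continue  # skip blank keep-alive lines
        event = json.loads(line)
        if event.get("done"):
            break  # server signalled the end of the document
        yield event.get("delta", "")
```

Because an `http.client.HTTPResponse` iterates over its body line by line, the generator can consume a live response directly, for example `for piece in iter_humanized_chunks(resp): print(piece, end="", flush=True)`, and each fragment can be rendered as soon as it arrives.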


Best Practices for Seamless Implementation

To get the most out of an AI text humanization API, consider these strategic approaches to your application architecture:

Implement Efficient Caching Layers

Since humanizing text is computationally intensive and often billed by the volume of text processed, a caching layer is highly recommended. By storing the results of previously humanized snippets, keyed on both the input text and the request parameters (the same text with a different tone is a different request), your application can serve repeated requests instantly. This improves performance and reduces operational costs by avoiding redundant API calls.
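A minimal in-memory version of such a cache is sketched below. The `compute` callback stands in for whatever function actually calls the API; the class name is illustrative. Hashing a canonical JSON encoding of the text plus its parameters keeps keys short and ensures that a change of tone or intensity misses the cache.

```python
import hashlib
import json
from typing import Callable


class HumanizeCache:
    """In-memory cache keyed by a hash of the input text plus request parameters."""

    def __init__(self) -> None:
        self._store: dict[str, str] = {}

    @staticmethod
    def key(text: str, params: dict) -> str:
        # sort_keys makes the key independent of dict insertion order.
        payload = json.dumps({"text": text, "params": params}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get_or_compute(
        self, text: str, params: dict, compute: Callable[[str, dict], str]
    ) -> str:
        k = self.key(text, params)
        if k not in self._store:
            self._store[k] = compute(text, params)  # call the API only on a miss
        return self._store[k]
```

One design consequence worth noting: if the provider's output is non-deterministic, caching pins the first result for a given input, which is usually desirable for consistency. A production deployment would more likely use a shared store such as Redis with an expiry time instead of a process-local dictionary.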

Graceful Error and Rate Limit Handling

Every API limits how much data can be processed in a given timeframe, and your application should handle these limits gracefully. If the service reports that it is rate-limited or temporarily overloaded, the application should queue the request and retry it after a delay, ideally one that grows with each failed attempt (exponential backoff). This prevents service interruptions for your users during peak traffic.
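The retry half of that advice can be sketched as exponential backoff with random jitter. `RateLimitedError` is a hypothetical exception your own client would raise on, for example, HTTP 429 or 503; the `sleep` parameter is injectable so the logic can be tested without real waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class RateLimitedError(Exception):
    """Hypothetical error your client raises on HTTP 429/503 responses."""


def call_with_backoff(
    call: Callable[[], T],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `call` on RateLimitedError with capped exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    attempt = 0
    while True:
        try:
            return call()
        except RateLimitedError:
            attempt += 1
            if attempt >= max_attempts:
                raise  # out of attempts: surface the error to the caller
            # "Full jitter": wait a random time in [0, base * 2^(attempt-1)], capped.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
```

Randomizing the wait spreads retries from many clients over time instead of having them all hit the API again at the same instant. If the provider sends a `Retry-After` header, honoring it is usually better than a computed delay; the queue that holds requests while they wait would sit in front of this function and is not shown.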

Multi-Step Content Validation

Humanization should never happen at the expense of accuracy. For high-stakes applications, developers often chain multiple tools together. This might involve generating a draft, running it through the humanization API, and then passing it through a final verification step to ensure that the core facts remain intact despite the stylistic changes.
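One cheap, automatable piece of such a verification step is checking that every figure in the draft survives the rewrite. The sketch below is a heuristic of our own, not a provider feature: it only catches dropped or altered numbers and percentages, so it complements rather than replaces entity checks or human review.

```python
import re

# Integers, decimals, thousands separators, and an optional trailing percent sign.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*%?")


def missing_numbers(original: str, humanized: str) -> list[str]:
    """Return numeric tokens from the original that are absent from the rewrite."""
    before = NUMBER.findall(original)
    after = set(NUMBER.findall(humanized))
    # dict.fromkeys de-duplicates while preserving first-seen order.
    return [n for n in dict.fromkeys(before) if n not in after]
```

For instance, if "Revenue grew 12% to $4.5 million in 2025." comes back as "In 2025, revenue rose by 12% and reached roughly $5 million.", the check returns `["4.5"]`, flagging the changed figure for review before publication.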

Frequently Asked Questions

Does humanizing text affect search engine performance?

It can. Search engines in 2026 prioritize content that provides genuine value and reads naturally for human audiences. By removing the repetitive patterns found in raw AI text, humanized content is less likely to be treated as low-quality automated spam, which can help maintain or improve search rankings.

Can these APIs handle multiple languages?

Many premium APIs now support a wide range of languages. They typically detect the input language automatically and apply the linguistic and cultural nuances needed to make text in that language feel authentic and locally resonant.

Is my data kept private during the process?

Reputable API providers maintain strict privacy standards. Many offer “zero-retention” policies, meaning the text is processed in temporary memory and deleted immediately after the humanized version is delivered to your application. This is crucial for maintaining user privacy and data security.

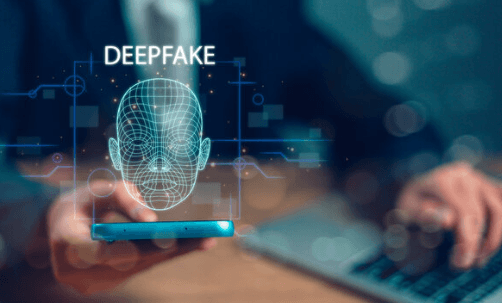

How do these tools interact with AI detectors?

They work by disrupting the statistical signatures that detectors look for. By intentionally varying sentence structures and choosing less "obvious" words, the humanizer shifts the statistical profile of the text closer to that of human writing, making it much harder for detectors to distinguish from content written by a human author.

Conclusion

Integrating an AI text humanization API is a vital step for any developer looking to bridge the gap between machine efficiency and human relatability. By following a structured integration process and focusing on the nuances of text flow, you can build applications that produce high-quality, authentic content that truly resonates with real people. As AI becomes even more integrated into our daily lives, the tools that help us maintain a human touch will be the ones that define the next generation of successful digital communication.